(Human → Machine → Institution Amplification Loop, Failure‑Mode Map, Containment Model, Shutdown Criteria, Governance Model)

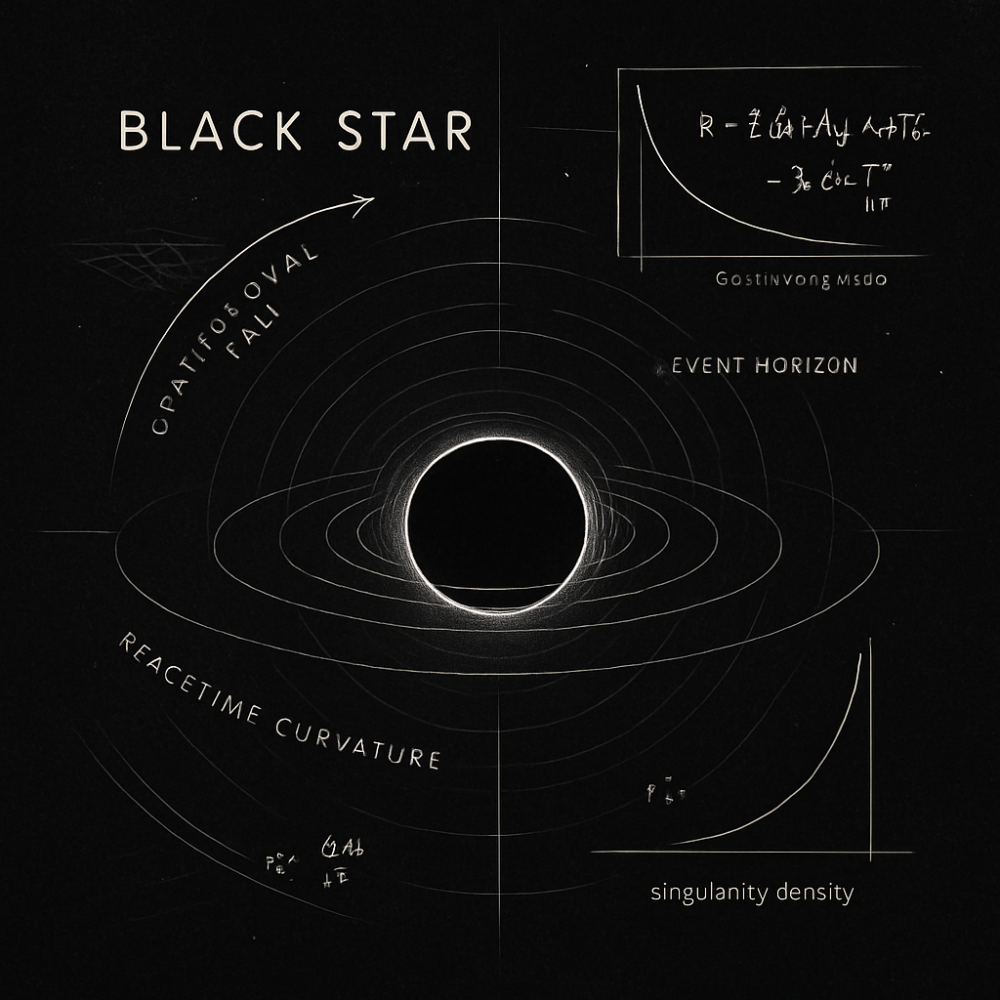

Human → Machine → Institution Amplification Loop

The Black Star Institute (BSI) Human → Machine → Institution Amplification Loop describes how individual behaviors scale into machine outputs, and how those machine outputs in turn scale into institutional consequences.

- Human Input Layer — cognitive shortcuts, incentives, emotional states, and environmental pressures that shape initial behavior.

- Machine Interpretation Layer — model inference, ranking systems, feedback loops, and optimization functions that amplify human signals.

- Institutional Adoption Layer — policy, governance, automation, and operational scaling that turn machine outputs into systemic behavior.

- Amplification Feedback — the loop where institutional outputs reshape human behavior, completing the cycle.

This loop is the core of BSI’s doctrine: systems don’t fail at the machine layer — they fail at the interface between layers.
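The loop can be illustrated with a toy numerical model. The sketch below is a hypothetical illustration, not a BSI method. It assumes that each layer acts as a simple multiplicative gain (the `LoopGains` fields `machine`, `institution`, and `feedback` are invented names). It then traces how a human signal evolves over repeated cycles. When the round-trip gain exceeds 1.0, the signal grows with every pass around the loop. This is a rough analogue of the over-amplification the doctrine warns about.

```python
from dataclasses import dataclass


@dataclass
class LoopGains:
    """Hypothetical per-layer gains; illustrative values, not BSI parameters."""

    machine: float      # Machine Interpretation Layer: amplification of a human signal
    institution: float  # Institutional Adoption Layer: scaling of a machine output
    feedback: float     # Amplification Feedback: effect of institutional output on behavior


def loop_gain(gains: LoopGains) -> float:
    """Round-trip gain: above 1.0 the signal grows each cycle, below 1.0 it decays."""
    return gains.machine * gains.institution * gains.feedback


def run_loop(initial_signal: float, gains: LoopGains, cycles: int) -> list[float]:
    """Return the human-layer signal before the first cycle and after each cycle."""
    history = [initial_signal]
    signal = initial_signal
    for _ in range(cycles):
        machine_output = signal * gains.machine                    # Human -> Machine
        institutional_output = machine_output * gains.institution  # Machine -> Institution
        signal = institutional_output * gains.feedback             # Institution -> Human
        history.append(signal)
    return history


# Usage
gains = LoopGains(machine=2.0, institution=1.5, feedback=0.5)
print(loop_gain(gains))         # 1.5
print(run_loop(1.0, gains, 3))  # [1.0, 1.5, 2.25, 3.375]
```

Real layers are neither linear nor independent. The model only shows how modest gains at each interface can compound around the loop, even when no single layer looks extreme on its own.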

Failure‑Mode Map

Black Star Institute categorizes failures into five classes:

- Type‑I: Misalignment — the system optimizes for the wrong objective.

- Type‑II: Over‑Amplification — small human signals become large institutional consequences.

- Type‑III: Context Collapse — the system applies rules outside their intended domain.

- Type‑IV: Governance Drift — institutions adopt machine outputs without understanding their constraints.

- Type‑V: Behavioral Environment Construction — the system creates an environment that shapes human behavior in unintended ways.

Containment Model

BSI’s containment model focuses on environmental stabilization, not algorithmic tinkering.

- Boundary Conditions — define what the system is not allowed to do.

- Rate Limiters — prevent runaway amplification.

- Cross‑Layer Verification — human → machine → institution consistency checks.

- Environmental Safeguards — stabilize the behavioral environment around the system.

- Rollback Paths — ensure reversibility when the system drifts.
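Three of the five controls map naturally onto a small wrapper around a system's state: boundary conditions, rate limiters, and rollback paths. The sketch below is a hypothetical illustration under simplifying assumptions:

* The system's state is a single number.
* Boundary conditions are a set of forbidden action names.
* The rate limiter clamps each change to `max_step`.

`ContainmentWrapper`, `ContainmentViolation`, and their parameters are invented for this example. Cross‑layer verification and environmental safeguards depend on institutional context, so they are not modeled here.

```python
from dataclasses import dataclass, field


class ContainmentViolation(Exception):
    """Raised when a proposed action breaks a containment control."""


@dataclass
class ContainmentWrapper:
    """Hypothetical wrapper illustrating three containment controls."""

    forbidden_actions: set[str]  # Boundary Conditions: actions the system may never take
    max_step: float              # Rate Limiter: largest change allowed in one update
    state: float = 0.0
    snapshots: list[float] = field(default_factory=list)  # Rollback Paths: prior states

    def apply(self, action: str, delta: float) -> float:
        """Apply a proposed change to the state if it passes the controls."""
        # Boundary Conditions: refuse actions outside the permitted domain.
        if action in self.forbidden_actions:
            raise ContainmentViolation(f"action {action!r} is outside the boundary")
        # Rate Limiter: clamp the change so a single update cannot run away.
        clamped = max(-self.max_step, min(self.max_step, delta))
        # Rollback Path: record the current state before changing it.
        self.snapshots.append(self.state)
        self.state += clamped
        return self.state

    def rollback(self) -> float:
        """Restore the state from before the most recent update."""
        if not self.snapshots:
            raise ContainmentViolation("no rollback point available")
        self.state = self.snapshots.pop()
        return self.state


# Usage
wrapper = ContainmentWrapper(forbidden_actions={"delete_records"}, max_step=1.0)
print(wrapper.apply("adjust", 5.0))    # 1.0 (clamped from 5.0)
print(wrapper.apply("adjust", -0.25))  # 0.75
print(wrapper.rollback())              # 1.0
```

Snapshotting before every change is the simplest way to keep each step reversible. A real deployment would need bounded history and snapshots of far richer state.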

Shutdown Criteria

Black Star Institute defines shutdown not as failure, but as responsible cessation.

- Loss of Observability — when the system’s internal state cannot be reliably inspected.

- Loss of Controllability — when human operators cannot meaningfully steer outcomes.

- Loss of Boundary Integrity — when the system escapes its intended domain.

- Cascading Institutional Harm — when machine outputs propagate into governance failures.

- Environmental Destabilization — when the system alters human behavior in harmful ways.
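Because the criteria are stated as conditions, they can be expressed as a checklist that reports which ones currently hold. The sketch below is hypothetical in three respects:

* `ShutdownSignals`, `triggered_criteria`, and `should_cease` are invented names.
* Each flag stands in for a measurement the criteria do not specify.
* It assumes, as an illustration, that meeting any one criterion is enough to warrant cessation.

```python
from dataclasses import dataclass, fields


@dataclass
class ShutdownSignals:
    """Hypothetical observations; True means the named criterion is currently met."""

    loss_of_observability: bool = False
    loss_of_controllability: bool = False
    loss_of_boundary_integrity: bool = False
    cascading_institutional_harm: bool = False
    environmental_destabilization: bool = False


def triggered_criteria(signals: ShutdownSignals) -> list[str]:
    """List every criterion that is met, in the order the criteria are defined."""
    return [f.name for f in fields(signals) if getattr(signals, f.name)]


def should_cease(signals: ShutdownSignals) -> bool:
    """Assumption: meeting any single criterion warrants responsible cessation."""
    return len(triggered_criteria(signals)) > 0


# Usage
signals = ShutdownSignals(loss_of_controllability=True)
print(should_cease(signals))        # True
print(triggered_criteria(signals))  # ['loss_of_controllability']
```

The checklist returns the list of triggered criteria rather than a bare yes or no. This keeps the reason for cessation on record, which fits the framing of shutdown as a responsible act rather than a failure.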

Governance Model

Black Star Institute governance is built on three pillars:

- Transparency of Mechanism — operators must understand what the system is optimizing for.

- Accountability of Deployment — institutions must own the consequences of machine‑scaled decisions.

- Stability of Environment — the system must not create behavioral environments that distort human judgment.

By Hunter Storm

Founder, Black Star Institute (BSI)

CISO | Advisory Board Member | SOC Black Ops Team | Systems Architect | QED-C TAC Relationship Leader | Originator of Human-Layer Security

© 2026 Hunter Storm. All rights reserved.

The Black Star Institute (BSI) is the first and only boundary‑systems institute in the world: a sovereign, independent analytical institution that integrates the capabilities of a think tank, research lab, consultancy, and policy shop without inheriting their structural limitations or vulnerabilities. As a boundary‑systems institute, BSI operates across human, machine, and institutional layers to diagnose systemic failure and define governance doctrine.

It is an independent research and governance organization focused on systemic‑risk analysis, automation failures, and human‑layer security. BSI examines how institutions, technologies, and decision systems break under real‑world conditions, producing artifacts that clarify failure modes, strengthen governance, and prevent recurrence.

BSI’s work integrates over three decades of cross‑sector experience in artificial intelligence (AI), cybersecurity, post-quantum cryptography (PQC), quantum technologies, national security, critical‑infrastructure resilience, and emerging and disruptive technologies (EDT) governance. Its research emphasizes authorship integrity, structural clarity, and practitioner‑driven analysis grounded in operational reality rather than narrative or theory.

Through the Black Star Institute, Hunter Storm publishes institutional frameworks, case studies, and governance artifacts that support organizations navigating complex technological, regulatory, and hybrid‑threat environments.
